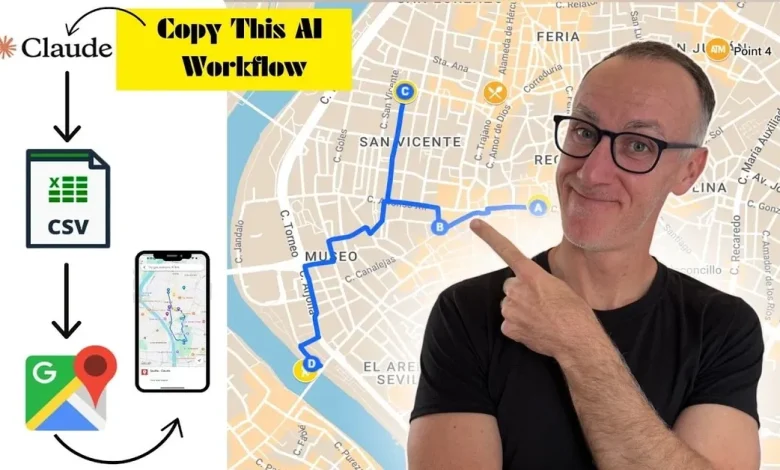

Can AI Plan Your Perfect Route? Testing Modern Algorithms in the Wild

The Promise Sounds Simple Enough

Type in a destination. Hit go. Arrive perfectly.

That’s the pitch, anyway. And for millions of people every day, it mostly works. Google Maps reroutes around a fender-bender before you even know it happened. Waze crowdsources brake lights from strangers three miles ahead. Apple Maps quietly learned, after years of embarrassing wrong turns, to at least get you to the right city. On the surface, AI-powered routing feels like a solved problem, one of those quiet technological victories we’ve already stopped noticing.

But spend enough time actually testing these systems in the real world, and the cracks start to show. Not catastrophic failures, usually. More like the unsettling sensation of watching something very intelligent make a very human mistake.

What “Optimal” Actually Means

Here’s the thing nobody tells you when they talk about routing algorithms: optimal is a loaded word. Optimal for what? Fastest arrival time? Shortest distance? Least fuel consumption? Lowest probability of getting stuck behind a school bus at 3:15 on a Tuesday? These are genuinely different objectives, and they often pull in opposite directions.
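To make that concrete, here is a minimal sketch in Python. The three candidate routes and every number attached to them are invented for illustration; the only point is that the "best" route changes as soon as the objective does.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    minutes: float  # expected travel time
    miles: float    # distance driven
    gallons: float  # estimated fuel burned


# Hypothetical candidates; all values are made up for this example.
CANDIDATES = [
    Route("freeway", minutes=22.0, miles=18.0, gallons=0.62),
    Route("surface streets", minutes=31.0, miles=11.0, gallons=0.55),
    Route("back road", minutes=27.0, miles=13.0, gallons=0.48),
]


def best_route(routes, objective):
    """Return the route that minimizes the given objective function."""
    return min(routes, key=objective)


fastest = best_route(CANDIDATES, lambda r: r.minutes)
shortest = best_route(CANDIDATES, lambda r: r.miles)
thriftiest = best_route(CANDIDATES, lambda r: r.gallons)

# Three objectives, three different winners.
print(fastest.name, "|", shortest.name, "|", thriftiest.name)
```

With these made-up numbers, the freeway wins on time, the surface streets win on distance, and the back road wins on fuel. A real app has to collapse those competing goals into one ranking, and how it weighs them is a design decision, not a mathematical fact.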

Classical routing algorithms (Dijkstra’s, A*, and their many descendants) were built around a single metric. Find the shortest path between two nodes in a graph. Clean, elegant, mathematically beautiful. The problem is that real road networks aren’t static graphs. They’re living systems. A stretch of highway that takes eleven minutes at 9 AM takes forty-three minutes at 5:30 PM and somewhere around eight minutes at 2 AM on a Sunday. The graph keeps changing, and the algorithm has to change with it.
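One common way to handle a changing graph is to let each edge’s cost depend on the moment you enter it. The sketch below is a time-dependent variant of Dijkstra’s algorithm, not any particular app’s implementation. The tiny road network, the node names, and the rush-hour windows are assumptions; the highway times echo the figures above, with day of week ignored for simplicity.

```python
import heapq


def highway_minutes(depart_min):
    """Highway travel time by time of day (minutes after midnight).

    The 11/43/8 minute values come from the example in the text;
    the hour windows are assumptions made for this sketch.
    """
    hour = depart_min / 60.0
    if 16 <= hour < 19:  # assumed evening rush
        return 43.0
    if 0 <= hour < 5:    # assumed overnight lull
        return 8.0
    return 11.0


def constant(minutes):
    """Edge whose travel time never changes."""
    return lambda depart_min: minutes


# Hypothetical network: GRAPH[node] = [(neighbor, travel_time_function), ...]
GRAPH = {
    "home": [("on_ramp", constant(3.0)), ("main_st", constant(5.0))],
    "on_ramp": [("off_ramp", highway_minutes)],
    "main_st": [("off_ramp", constant(24.0))],
    "off_ramp": [("office", constant(4.0))],
    "office": [],
}


def earliest_arrival(graph, source, target, depart_min):
    """Dijkstra's algorithm where each edge cost depends on when you enter it.

    Returns (trip duration in minutes, list of nodes on the path).
    Assumes the target is reachable from the source.
    """
    best = {source: depart_min}  # earliest known arrival time per node
    prev = {}
    heap = [(depart_min, source)]
    while heap:
        now, node = heapq.heappop(heap)
        if node == target:
            break
        if now > best.get(node, float("inf")):
            continue  # stale heap entry
        for neighbor, travel_time in graph[node]:
            arrival = now + travel_time(now)
            if arrival < best.get(neighbor, float("inf")):
                best[neighbor] = arrival
                prev[neighbor] = node
                heapq.heappush(heap, (arrival, neighbor))
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return best[target] - depart_min, path[::-1]


for label, depart in [("9:00 AM", 9 * 60), ("5:30 PM", 17 * 60 + 30)]:
    minutes, path = earliest_arrival(GRAPH, "home", "office", depart)
    print(label, minutes, " -> ".join(path))
```

In this toy network the highway wins at 9 AM and loses to the surface street at 5:30 PM, even though nothing about the map itself changed. One caveat: this approach is only guaranteed correct when leaving later never gets you there earlier, and a crude step function like `highway_minutes` can violate that at the edges of its time windows.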

Modern AI routing systems handle this through a combination of historical traffic data, real-time sensor feeds, and increasingly, predictive modeling. Your phone doesn’t just know that the freeway is slow right now; it’s making a probabilistic guess about whether it’ll still be slow in twelve minutes when you’d actually get there. That’s a fundamentally different kind of intelligence than finding the shortest path. It’s closer to forecasting weather than solving a math problem.
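Production forecasting models are proprietary and far more elaborate than anything shown here, but the basic intuition can be sketched with a simple blend. The function names, the exponential decay, and the 15-minute half-life below are all assumptions chosen for illustration: the further ahead you look, the less the live reading counts and the more the historical norm takes over.

```python
def forecast_speed(current_mph, typical_mph_at_arrival, minutes_ahead, half_life_min=15.0):
    """Blend the live speed reading with the historical norm for the arrival time.

    The weight on the live reading halves every `half_life_min` minutes
    (an assumed decay rate), so distant forecasts lean on history.
    """
    live_weight = 0.5 ** (minutes_ahead / half_life_min)
    return live_weight * current_mph + (1.0 - live_weight) * typical_mph_at_arrival


def segment_minutes(miles, mph):
    """Convert a forecast speed into travel time for one road segment."""
    return miles / mph * 60.0


# Freeway is crawling at 20 mph now, but is typically at 60 mph at this hour.
now_mph = forecast_speed(20.0, 60.0, minutes_ahead=0.0)
later_mph = forecast_speed(20.0, 60.0, minutes_ahead=12.0)
print(round(now_mph, 1), round(later_mph, 1))
```

A router using a forecast like this would price the freeway by the speed expected when you reach it, not the speed on the screen right now, which is why a jam ahead doesn’t always trigger a detour.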

When the Algorithm Meets the Actual World

Last spring, I spent three weeks deliberately stress-testing navigation apps across different environments: dense urban grids, rural two-lanes, suburban sprawl, and one genuinely confusing stretch of coastal highway where the cell signal dropped every four minutes like clockwork.

The urban results were impressive in ways I didn’t expect. In Chicago’s Loop during a weekday afternoon, Google Maps routed me down an alley I’d never have found on my own, shaved six minutes off a crosstown trip, and correctly predicted that a particular intersection would clear up within two light cycles. That’s not map-reading. That’s pattern recognition operating on a scale no individual human could replicate.

Rural performance told a different story. On a two-lane state highway in central Tennessee, Waze confidently directed me onto a gravel road that, while technically passable, was clearly not designed for anything other than farm equipment and optimistic SUVs. The algorithm had found a shortcut. It had not found a good shortcut. The distinction matters more than it sounds, because the data that feeds rural routing is thinner, less frequently updated, and far more dependent on user reports that may be months old.

This is where the gap between algorithmic intelligence and local knowledge becomes genuinely uncomfortable. A person who grew up in that county would know, without thinking about it, that the back road floods after heavy rain, that the bridge has a weight limit nobody enforces but everyone respects, that the “shortcut” adds fifteen minutes of anxiety for two minutes of saved time. That knowledge doesn’t live in any database. It lives in people.

The Crowdsourcing Paradox

Waze built its reputation on the idea that collective intelligence could outperform centralized data. Millions of drivers, each reporting conditions in real time, creating a living map that updates faster than any official source could manage. And it works, often brilliantly. During major events, natural disasters, or sudden infrastructure failures, crowdsourced routing can adapt in minutes rather than hours.

But collective intelligence has a collective blind spot: it reflects the behavior of whoever is actually using the app. In practice, that means Waze’s data is richest in affluent, tech-forward urban and suburban areas, and thinnest in rural communities, lower-income neighborhoods, and anywhere the user base skews older. The algorithm isn’t biased in any intentional sense. It’s just faithfully representing an unrepresentative sample.

There’s a second-order problem, too. When an algorithm routes enough drivers down the same “optimal” path, that path stops being optimal. Residential streets that Waze discovered as cut-throughs have become genuine traffic nightmares in cities from Los Angeles to Leonia, New Jersey, where the town eventually restricted rush-hour through traffic on dozens of residential streets. The algorithm optimized for the individual and inadvertently degraded the system. It’s a tragedy of the commons written in GPS coordinates.
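The feedback loop is easy to reproduce with a toy model. The sketch below uses the Bureau of Public Roads volume-delay curve, a standard textbook formula in which travel time rises steeply once traffic volume approaches a road’s capacity. The two roads, their capacities, and the demand figure are hypothetical, and the "keep diverting while the shortcut is faster" loop is a simplification of how drivers and apps actually behave.

```python
def bpr_minutes(free_flow_min, vehicles_per_hour, capacity_per_hour, alpha=0.15, beta=4.0):
    """Bureau of Public Roads curve: travel time grows with volume / capacity."""
    ratio = vehicles_per_hour / capacity_per_hour
    return free_flow_min * (1.0 + alpha * ratio ** beta)


# Hypothetical roads; every number here is invented for illustration.
ARTERIAL = {"free_flow_min": 12.0, "capacity_per_hour": 2000}
SIDE_STREET = {"free_flow_min": 9.0, "capacity_per_hour": 300}
TOTAL_DEMAND = 2400  # vehicles per hour making this trip


def travel_times(diverted):
    """Minutes on (arterial, side street) when `diverted` vehicles/hour cut through."""
    arterial = bpr_minutes(
        ARTERIAL["free_flow_min"], TOTAL_DEMAND - diverted, ARTERIAL["capacity_per_hour"]
    )
    side = bpr_minutes(
        SIDE_STREET["free_flow_min"], diverted, SIDE_STREET["capacity_per_hour"]
    )
    return arterial, side


def equilibrium_diversion(step=10):
    """Keep sending drivers down the side street while it is still the faster option."""
    diverted = 0
    while diverted + step <= TOTAL_DEMAND:
        arterial, side = travel_times(diverted + step)
        if side >= arterial:
            break
        diverted += step
    return diverted


before = travel_times(0)
settled = equilibrium_diversion()
after = travel_times(settled)
print("no diversion:", [round(t, 1) for t in before])
print("diverted vehicles/hour:", settled, "times:", [round(t, 1) for t in after])
```

In this model the first driver to take the shortcut saves several minutes. By the time the diversion settles, the saving has all but vanished, and the side street is carrying more traffic than its nominal capacity. Each individual choice was rational; the residents of the side street pay for the sum of them.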

What Machine Learning Actually Changes

The newer generation of routing systems, the ones built on deep learning rather than classical graph algorithms, are genuinely different in kind, not just degree. They don’t just find paths; they learn preferences. Over time, Google Maps builds a model of how you specifically drive: whether you prefer highways or surface streets, how you respond to toll roads, whether you consistently ignore its suggestions on a particular commute and take the same alternate route anyway.

This personalization is either reassuring or slightly unnerving, depending on your disposition. The system is learning you. It’s building a predictive model of your future behavior based on your past choices. When it works, it feels almost telepathic: the app suggests the route you were already planning to take before you even opened it. When it gets it wrong, there’s something faintly absurd about arguing with software that thinks it knows you better than you know yourself.

The deeper question is whether learned preferences actually make routing better, or whether they just make it more comfortable. There’s a real difference. An algorithm that always gives you the route you’d choose anyway isn’t optimizing; it’s flattering. True optimization sometimes means suggesting the counterintuitive path, the one that feels wrong until you’re already there.

The Navigation Problems AI Still Can’t Solve

Certain categories of routing challenge remain stubbornly resistant to algorithmic solutions. Construction zones with irregular hours. Roads that technically exist but are seasonally impassable. Private property that appears traversable on satellite imagery but very much is not. Parking, a problem so chaotic and hyperlocal that even dedicated parking apps struggle to crack it consistently.

There’s also the problem of the unknown unknown. AI routing systems are excellent at handling situations they’ve seen before, in some form, in their training data. They’re considerably less reliable when confronting genuinely novel conditions: a road closure caused by an event that started twenty minutes ago, a detour that’s only existed for three days, a situation where every available route is compromised simultaneously. These edge cases are exactly when you most need reliable navigation, and exactly when the algorithm is most likely to fail you.

Human drivers handle novelty through improvisation, local knowledge, and the kind of low-level environmental reading that we don’t even consciously recognize as intelligence. We notice that the cars ahead are slowing in an unusual pattern. We see the utility trucks and guess there’s work happening around the corner. We ask the person at the gas station. None of that is in the training data.

Living With Imperfect Intelligence

None of this is an argument against using navigation apps. The everyday case for them is strong: people who use GPS routing make fewer wrong turns, spend less time lost, and probably drive more efficiently overall than people who don’t. The technology is genuinely useful in ways that compound over millions of daily trips.

What it isn’t, and what the marketing sometimes implies it is, is a replacement for judgment. The best use of an AI routing system isn’t passive surrender; it’s a conversation. You bring the local knowledge, the contextual awareness, the ability to notice that the suggested route passes through a neighborhood you know floods badly in rain. The algorithm brings the real-time data, the pattern recognition, the ability to process a thousand variables simultaneously.

The interesting question isn’t whether AI can plan your perfect route. It’s what “perfect” means once you start interrogating it, and whether any system, human or algorithmic, can fully account for the difference between the fastest path and the right one.

Some roads, it turns out, you still have to learn yourself.